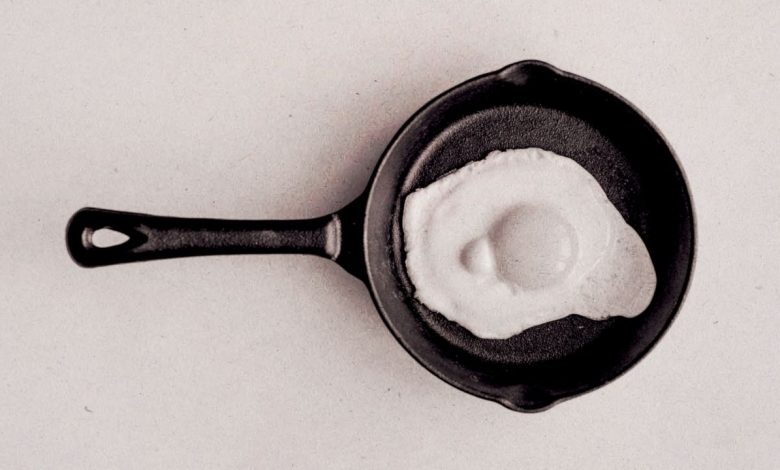

This is your brain on ChatGPT: MIT study shows AI makes you dumb

Sizzle. Sizzle. That’s the sound of your neurons frying in the heat of a thousand GPUs as your AI productivity tool of choice merrily whirs through your work. As it turns out, offloading all that cognitive effort to a robot while you sit back and watch is turning your mind into a couch potato.

That’s what a recently published (and not yet peer-reviewed) paper from some of MIT’s brightest minds suggests, anyway.

The research examines the “neural and behavioral consequences” of using LLMs (Large Language Models) such as ChatGPT for, in this case, writing an essay. The findings raise serious questions about how long-term use of AI may affect learning, thinking, and memory. Worryingly, we recently saw this play out in real life.


The study, titled “Your Brain on ChatGPT: Accumulation of Cognitive Debt when Using an AI Assistant for Essay Writing Task,” involved 54 participants divided into three groups:

- LLM group: Instructed to complete the assignments using only ChatGPT, with no other websites or tools.

- Search engine group: Allowed to use any website except LLMs; even AI-enhanced answers were prohibited.

- Brain-only group: Relying on their own knowledge alone.

Across three sessions, the groups were tasked with writing an essay on one of three topics, which changed from session to session. An example of an essay question for the topic “Art” was: “Do works of art have the power to change people’s lives?”

Participants had 20 minutes to answer a question related to their chosen topic in the form of an essay, all while wearing an Enobio headset to collect EEG signals from their brains.

In a fourth session, the LLM and Brain-only groups swapped conditions to measure any lasting effect of the previous sessions.

The results? Across the first three sessions, the brain-only writers showed the most active, widespread brain activity during the task, while the LLM-assisted writers showed much lower levels of brain activity across the board (although they generally completed the task faster). Search engine-assisted users typically fell somewhere between the two.

In short, only the brain-only writers were truly engaging with the assignment, producing more creative and distinctive writing while actually learning. They were able to quote from their essays afterward and felt a strong sense of ownership of their work.

LLM users, by contrast, engaged less with each session, came to rely more uncritically on ChatGPT as the study progressed, and felt less ownership of the results. Their essays were judged to be very similar to one another, and participants often failed to quote accurately from their own work, suggesting weaker long-term memory formation.

The researchers called this phenomenon “metacognitive laziness”: not just a good name for a prog-rock band, but also a fitting label for the hazy space between autopilot and copilot, where participants disengage and let the AI do the thinking for them.

But it was the fourth session that produced the most worrying results. According to the study, when the LLM and Brain-only groups traded places, the group that had relied on AI failed to return to pre-LLM levels.

TL;DR: AI is making us stupid, but we didn’t need a study to prove it

To put it simply, the continued use of AI tools like ChatGPT to “assist” with tasks that require critical thinking, creativity, and mental engagement may erode our natural ability to access those processes in the future.

But we didn’t need a 206-page study to tell us that.

On June 10, an outage lasting more than 10 hours left ChatGPT users cut off from their AI assistant, and it sparked a disturbing trend of people openly admitting, with little apparent self-awareness, that without access to OpenAI’s chatbot they had simply forgotten how to think, write, or work.

How it feels like coding without chatgpt ChatGPT is down pic.twitter.com/KEThaV0QU9 (January 23, 2025)

This study may have used EEG caps and grading algorithms to prove it, but most of us are probably already living out its findings.

When faced with a choice between an easy path and a difficult one, many of us have assumed that only a smooth-brained person would take the harder route.

However, as this study suggests, the so-called easy path may be switching off our frontal lobes for good, at least when it comes to our use of AI.

This is how I feel when Chat GPT is down: #ChatGPT pic.twitter.com/Ne1pslXFk7 (June 10, 2025)

This is especially alarming when you consider students, who use these tools in large numbers, and the fact that OpenAI itself is pushing for wider adoption of ChatGPT in education as part of its goal of building an “AI-Ready Workforce.”

A 2023 study by Intelligent.com revealed that a third of US college students surveyed used ChatGPT for schoolwork during the 2022/23 academic year.

In 2024, a survey from the Digital Education Council found that 86% of students across 16 countries were using artificial intelligence in their studies to some extent.

The big sell of AI is productivity: the promise that we can do more, faster. And yes, MIT researchers previously concluded that AI tools can increase worker productivity by up to 15%, but the long-term impact suggests we are trading intelligence for dependence. That feels like a step backward.

At least for the person sitting in front of the computer.

Sizzle. Sizzle.