AI chatbots with web browsing can be abused as malware relays

AI chatbots with web browsing can be abused as malware relays, according to a Check Point Research demo. Instead of calling home to a conventional command server, the malware can use the chatbot's URL fetcher to pull commands from a malicious page, with the chatbot's response carrying them back to the infected machine.

In many environments, traffic to major AI platforms is already treated as routine, which would let command-and-control traffic blend into normal web usage. The same method can also be used to exfiltrate data.

Microsoft addressed the issue in a statement, framing it as a post-compromise communications problem. It said that once a device is compromised, attackers will try to use any available service, including AI-based ones, and called for defense-in-depth security controls to prevent infection and limit what happens afterward.

The demo turns a chat into a relay

The concept is straightforward. The malware prompts a web-based AI assistant to load a URL and summarize what it finds, then scrapes the returned text for embedded instructions.

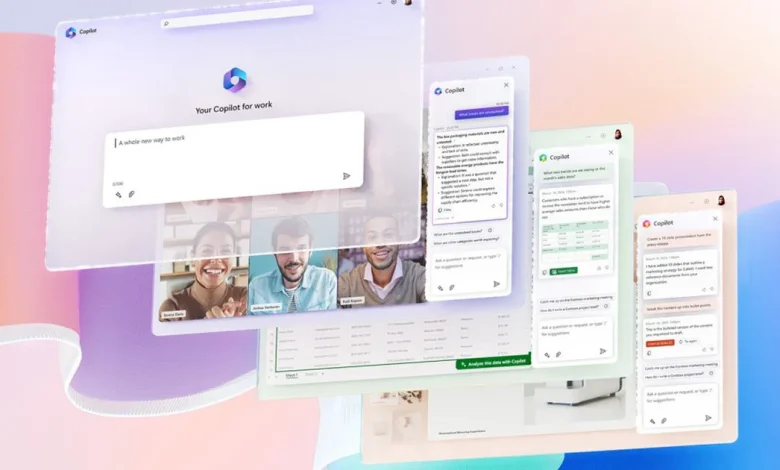

Check Point said it tested the technique against Grok and Microsoft Copilot through their web interfaces. An important detail is access: the flow is designed to avoid developer APIs, and in the tested cases it can work without an API key, reducing friction for abuse.

For data theft, the method can work in reverse. One approach described is to place data in URL query parameters, then rely on an AI-triggered request to deliver it to the adversary’s infrastructure. Basic encoding can further obscure what is being sent, making simple content filtering less reliable.
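Because encoding defeats keyword matching, one defensive angle is to look at the shape of query values instead of their content. The following is a minimal sketch of that idea, not something taken from the Check Point research: it flags query parameters that are both long and high in Shannon entropy, as encoded payloads often are. The function names and both thresholds are hypothetical and would need tuning against real traffic, since session tokens and tracking IDs can trip the same check.

```python
import math
from collections import Counter
from urllib.parse import parse_qsl, urlsplit

# Hypothetical thresholds chosen for illustration; tune against your own logs.
MIN_LENGTH = 24            # ignore short values such as ordinary search terms
ENTROPY_THRESHOLD = 4.0    # bits per character


def shannon_entropy(text: str) -> float:
    """Return the Shannon entropy of text in bits per character."""
    if not text:
        return 0.0
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in Counter(text).values())


def suspicious_query_params(url: str) -> list[tuple[str, float]]:
    """Return (parameter name, entropy) for long, high-entropy query values."""
    flagged = []
    for name, value in parse_qsl(urlsplit(url).query, keep_blank_values=True):
        if len(value) >= MIN_LENGTH:
            entropy = shannon_entropy(value)
            if entropy >= ENTROPY_THRESHOLD:
                flagged.append((name, entropy))
    return flagged
```

A check like this is only a triage signal. It would typically run over proxy or DNS-adjacent logs and feed an analyst queue, not block traffic on its own.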

Why it is hard to spot

This is not a new category of malware. It is a familiar command-and-control pattern wrapped in a service that many companies are actively enabling. If AI browsing features are left on by default, an infected system can try to hide behind domains that appear low-risk.

Check Point also highlights how ordinary the plumbing is. Its example uses WebView2, an embedded browser component found on modern Windows machines. In the described workflow, the program collects basic host information, opens a hidden web view to the AI service, triggers a URL request, and parses the response to extract the next command. That can look like normal app behavior, not an obvious red flag.

What security teams should do

Treat web-enabled chatbots like any other highly trusted cloud application that can be abused after a compromise. If they are allowed, monitor for patterns that suggest automation: repeated URL loads, unusual request timing, or traffic volumes inconsistent with human usage.
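As a rough illustration of what monitoring for automation could look like, the sketch below flags hosts whose requests to an AI service arrive at near-constant intervals, the way a beacon would. It assumes you already have (host, timestamp) pairs extracted from proxy logs for the relevant domains. The function names and thresholds are hypothetical, and malware that adds random jitter to its timing would evade a check this simple.

```python
from collections import defaultdict
from statistics import mean, pstdev

# Hypothetical thresholds chosen for illustration.
MIN_REQUESTS = 6          # too few requests gives no meaningful timing signal
MAX_JITTER_RATIO = 0.1    # stdev of gaps divided by mean gap


def looks_automated(timestamps: list[float]) -> bool:
    """Return True when request gaps are suspiciously regular."""
    if len(timestamps) < MIN_REQUESTS:
        return False
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    average_gap = mean(gaps)
    if average_gap == 0:
        return True  # every request shares one timestamp: not human typing
    return pstdev(gaps) / average_gap <= MAX_JITTER_RATIO


def automated_hosts(events: list[tuple[str, float]]) -> list[str]:
    """Group (host, timestamp) events by host and return the flagged hosts."""
    by_host = defaultdict(list)
    for host, timestamp in events:
        by_host[host].append(timestamp)
    return sorted(host for host, ts in by_host.items() if looks_automated(ts))
```

Human chat sessions tend to be bursty and irregular, so a low ratio of gap deviation to mean gap is a reasonable first-pass signal. In practice it would be combined with volume and time-of-day checks.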

AI browsing features may belong only on managed devices and in specific roles, not everywhere. The open question is scale: this is a demo, and it does not measure success rates against hardened fleets. What to watch next is whether providers add stronger automation detection to web chat, and whether defenders begin to treat AI services as potential command-and-control and exfiltration channels.