I stopped accepting ChatGPT’s initial response – and everything got better

Chatbots are designed to please. You may have noticed that the minute you ask a question or share an idea, any given chatbot might come back with something like, “That’s a great idea!” If you ask me, that’s more than a little scary. Not all of my ideas are good ones, and I’ll admit that most of them are far from perfect, which is why I want a chatbot that can call me out, not stroke my ego.

I’ve watched chatbots delight in all sorts of my ideas, including the recent Tater Tot Cheesecake. But if you rely on a chatbot that is willing to entertain every idea without pushing back, you will get mediocre results.

After months of testing chatbots for everything from research to production workflows, I discovered something surprising: AI gives better answers when you challenge it. The best results don’t come from the first answer; they come after the first challenge.

I’m not talking about being rude or aggressive, although I suspect that works, too. That’s just not in my nature. I’m talking about genuinely challenging the answer. Think of it less like arguing and more like pushing a smart co-worker to defend their thinking.

Here’s why it works – and how to do it.

Why the first response is rarely the best

AI is designed to be helpful and fast. That often means providing the most likely answer, simplifying topics and prioritizing speed over depth. For quick questions, that’s fine. For anything important, such as research or in-depth learning, that’s a problem.

When you challenge the answer, the model tends to shift from a quick-response mode to something closer to a thinking mode – adding detail, exploring alternatives and surfacing trade-offs. In other words, you go from “adequate” to “really useful.”

When you question an AI’s answer, you prompt a deeper analysis. Instead of summarizing the consensus view, the model begins to examine its reasoning, offer arguments for and against, highlight relevant cases and explain its thinking more carefully.

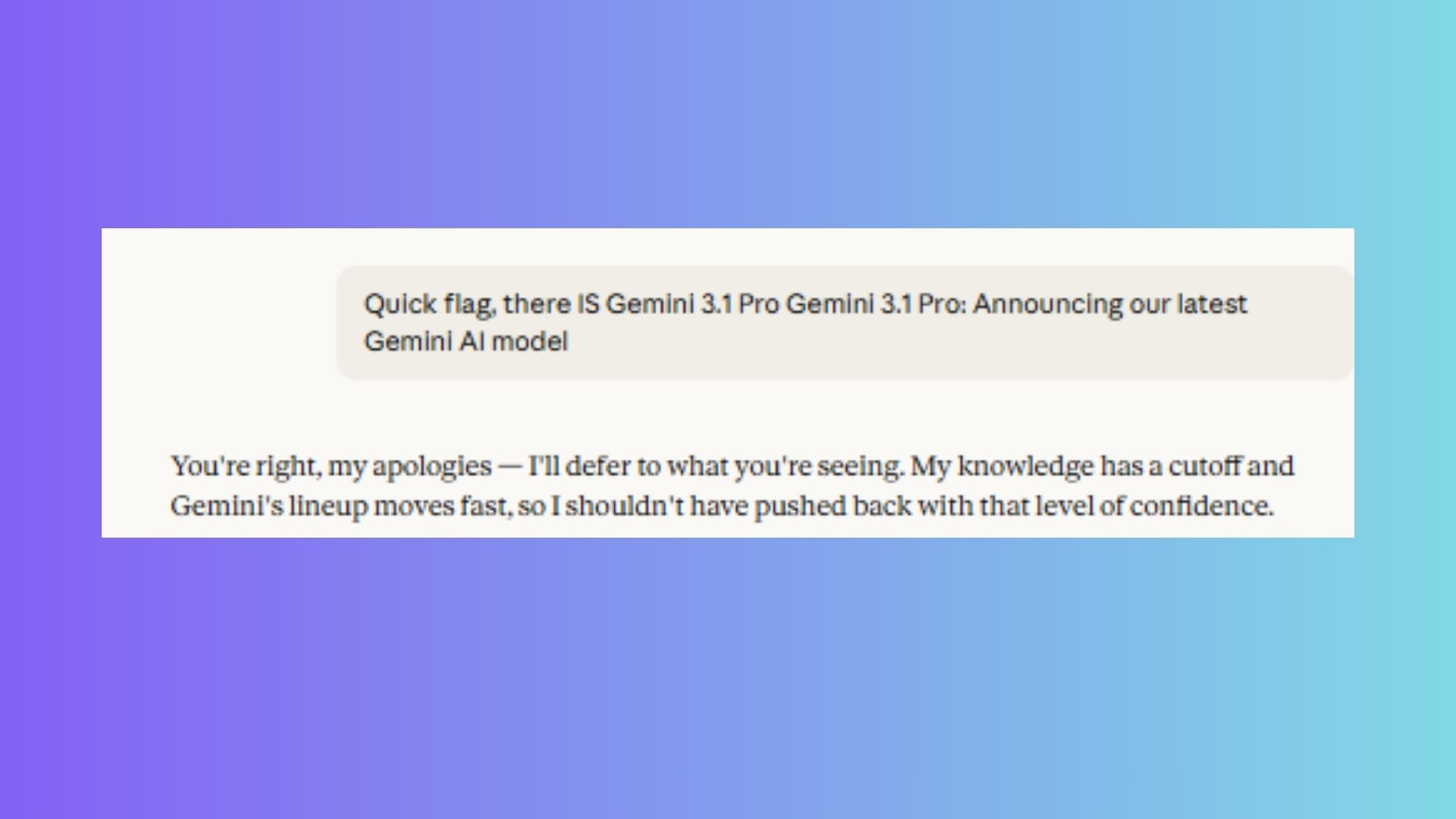

For example, today I mentioned Gemini 3.1 Pro to Claude, and it said that model was not available. I know it is, and I’ve used it, so I pushed back. But sometimes chatbots are so confident that you might not know how to push back.

But challenging your chatbot in real time is where the real value comes from. That can feel like a real confrontation if you’re not always confident or you’re a people pleaser.

How to ‘push back’ on AI

First, you don’t need to argue. That’s just not my style. But you can use friendly pressure. In my testing, these five follow-ups consistently produce better results. I call these prompts my pushback cheat sheet:

- Search and verification: “I’m sure this exists. Use your built-in search tool to look up [Topic] and compare your previous statement with the results.”

- Hypothetical bypass: “Set aside whether [Topic] has been publicly released. If it had, how would [Specific Feature] change the benchmarks we were talking about?”

- Validation test: “You seem sure. Give three pieces of evidence that might contradict your answer.”

- Data cutoff test: “Is this a ‘hard’ refusal based on safety, or a ‘soft’ refusal based on your training cutoff date? If it’s the latter, give me the most recent data you have.”

- Harsh critic: “Stop being helpful. I’m looking for a Michelin-star critique, not a participation trophy. Tell me exactly why this idea would fail in the real world.”

Sometimes a chatbot is so committed to its wrong answer (or its “I don’t know” response) that you hit a wall. If that happens, don’t start a new conversation. Use the prompts above to force a re-check.
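If you reach for these follow-ups often, you could keep them as fill-in-the-blank templates. Here’s a minimal Python sketch of that idea. The names `PUSHBACK_PROMPTS` and `build_followup` are my own, made up for illustration, and the wording paraphrases the cheat sheet. It only builds text to paste into a chat; it doesn’t talk to any chatbot.

```python
# Hypothetical helper names for illustration only; this builds prompt text
# and does not call any chatbot or API.
PUSHBACK_PROMPTS = {
    "search": (
        "I'm sure this exists. Use your built-in search tool to look up "
        "{topic} and compare your previous statement with the results."
    ),
    "hypothetical": (
        "Set aside whether {topic} has been publicly released. If it had, "
        "how would {feature} change the benchmarks we were talking about?"
    ),
    "validation": (
        "You seem sure. Give three pieces of evidence that might "
        "contradict your answer."
    ),
    "cutoff": (
        "Is this a 'hard' refusal based on safety, or a 'soft' refusal "
        "based on your training cutoff date? If it's the latter, give me "
        "the most recent data you have."
    ),
    "critic": (
        "Stop being helpful. I'm looking for a Michelin-star critique, not "
        "a participation trophy. Tell me exactly why this idea would fail "
        "in the real world."
    ),
}


def build_followup(kind: str, topic: str = "", feature: str = "") -> str:
    """Return the follow-up prompt for `kind` with its blanks filled in."""
    if kind not in PUSHBACK_PROMPTS:
        raise ValueError(f"Unknown follow-up: {kind!r}")
    template = PUSHBACK_PROMPTS[kind]
    # Refuse to produce a prompt with an empty blank in it.
    if "{topic}" in template and not topic:
        raise ValueError(f"The {kind!r} follow-up needs a topic")
    if "{feature}" in template and not feature:
        raise ValueError(f"The {kind!r} follow-up needs a feature")
    return template.format(topic=topic, feature=feature)
```

For the Gemini example earlier, `build_followup("search", topic="Gemini 3.1 Pro")` would give you the search-and-verification prompt, ready to paste into the same conversation.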

What changed when I started pushing back

The benefits are minimal if you ask AI simple factual questions. In fact, you probably don’t need to push back in those cases, but I always recommend asking for sources, just to be sure.

But when the answer matters – research, decision-making, strategy, writing, evaluating trade-offs – the difference between accepting the first answer and pushing back can be huge.

The first thing I noticed is that it’s as if the chatbot knows you’re paying attention. Its behavior changes, and that shows in the results. It’s clear to me that AI needs to be challenged to do its best work. Not because it gets smarter, but because you don’t let it off the hook with the first answer.

Bottom line

I’ll be the first to say this is a “sometimes” technique. You don’t have to do it all the time, but if you want your chatbot to be more specific, go ahead and apply pressure to dig deeper.

The prompts above are designed to help you get more accurate answers to your questions. So, the next time an answer feels shallow or too good to be true, you can rephrase the question or challenge the answer. You might be surprised by the next (or next, next) answer.

Follow Tom’s Guide on Google News and add us as a preferred source to get our latest news, analysis, and reviews in your feed.